Your website just launched. It looks great. The client signs off. Then a customer tries to check out on their phone, the payment form breaks, and nobody knows until the support tickets start rolling in.

This scenario plays out constantly — and it’s getting worse. Websites are more complex than ever. More devices, more browsers, more user paths. Traditional testing struggles to keep up. AI-powered testing is changing that equation, and it’s worth understanding what that means for your next web project.

TL;DR

AI-powered testing tools help catch bugs faster, cover more scenarios, and reduce the manual effort required for quality assurance. They don’t replace human testers — they amplify what experienced QA teams can accomplish by automating the repetitive work. For business owners, this means fewer post-launch surprises, faster development cycles, and better confidence that your website works correctly across every device and browser your customers actually use. The investment in smarter testing processes pays for itself in avoided downtime and preserved customer trust.

Why traditional testing falls short

Manual testing has always been the backbone of quality assurance. A QA team clicks through pages, fills out forms, tests on different browsers, and reports what breaks. It works — but it has some fundamental limitations.

Coverage gaps are inevitable. A typical business website has hundreds of possible user paths. Factor in different browsers, screen sizes, and device types, and the number of combinations becomes enormous. No manual testing team can cover every scenario every time.
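To make the scale concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it (200 user paths, four browsers, four screen sizes, two input types, two minutes per manual check) is an illustrative assumption, not a measurement from a real project.

```python
from itertools import product

# Illustrative assumptions only: a modest site and a small support matrix.
USER_PATHS = 200          # stands in for "hundreds of possible user paths"
BROWSERS = ["Chrome", "Safari", "Firefox", "Edge"]
SCREEN_SIZES = ["phone", "tablet", "laptop", "desktop"]
DEVICE_TYPES = ["touch", "mouse-and-keyboard"]
MINUTES_PER_CHECK = 2     # assumed time to walk one path manually


def count_scenarios(paths: int, *dimensions: list[str]) -> int:
    """Return the number of user paths times every combination of dimensions."""
    environments = list(product(*dimensions))
    return paths * len(environments)


total = count_scenarios(USER_PATHS, BROWSERS, SCREEN_SIZES, DEVICE_TYPES)
print(total)                                   # 6400
print(round(total * MINUTES_PER_CHECK / 60))   # 213 (hours for one full pass)
```

Even with these small assumed numbers, one complete manual pass would take more than five working weeks for a single tester, which is why full coverage on every release is out of reach by hand.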

Regression testing is repetitive and slow. Every time developers change something, QA needs to retest everything that might be affected. The World Quality Report 2023-24 highlights that organizations are investing heavily in QE lifecycle automation specifically because manual regression cycles can’t keep pace with modern development speed.

Human attention fades. After the fiftieth time checking the same form on the same page, even the best tester can miss subtle issues. Visual differences between browsers, slight layout shifts on mobile, or form validation edge cases slip through because human attention has limits.

These aren’t theoretical problems. Organizations increasingly recognize the gap between development speed and testing capacity, and AI is emerging as one way to bridge it.

What AI brings to testing

AI doesn’t replace the testing process. It makes specific parts of it dramatically more effective.

Automated test generation. AI tools can analyze your website’s structure and automatically generate test cases that cover common user flows. Instead of a QA team manually writing test scripts for every button, form, and navigation path, AI creates a baseline of tests that covers the most critical scenarios. Industry data shows that test case generation is the most common application of AI in testing today.

Visual regression detection. AI-powered visual testing tools compare screenshots of your website across browsers and devices, flagging differences that might indicate a bug. A button that shifts three pixels on Safari, a font that renders differently on Android, a layout that breaks at a specific screen width — AI catches these visual discrepancies with a consistency that manual review simply can’t match.
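The underlying idea can be sketched with a plain pixel comparison. This is a simplified baseline, not how any particular product works: commercial tools typically layer smarter, model-based comparison on top. The function names, the per-channel `tolerance`, and the 1 percent `max_ratio` threshold are all assumptions chosen for illustration.

```python
Pixel = tuple[int, int, int]   # (red, green, blue), each 0-255
Image = list[list[Pixel]]      # rows of pixels


def diff_ratio(baseline: Image, candidate: Image, tolerance: int = 10) -> float:
    """Fraction of pixels that differ by more than `tolerance` on any channel."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        return 1.0  # different dimensions: treat as fully different
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = 0
    for row_a, row_b in zip(baseline, candidate):
        for px_a, px_b in zip(row_a, row_b):
            if any(abs(a - b) > tolerance for a, b in zip(px_a, px_b)):
                changed += 1
    return changed / total


def is_visual_regression(baseline: Image, candidate: Image,
                         max_ratio: float = 0.01) -> bool:
    """Flag the screenshot when more than `max_ratio` of its pixels changed."""
    return diff_ratio(baseline, candidate) > max_ratio


# A 4x4 white "screenshot" versus one where a single pixel turned black.
white = [[(255, 255, 255)] * 4 for _ in range(4)]
broken = [row[:] for row in white]
broken[0][0] = (0, 0, 0)
print(is_visual_regression(white, broken))  # True
```

The tolerance is what keeps harmless rendering noise, such as slightly different anti-aliasing between browsers, from being reported as a bug.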

Self-healing tests. One of the biggest headaches in traditional automated testing is maintenance. When developers update a page, existing test scripts break because they’re looking for elements that moved or changed. AI-powered tests can adapt to minor changes automatically, reducing the maintenance burden significantly. Organizations using AI testing tools report up to 85 percent lower test maintenance costs.
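A minimal sketch of the self-healing idea, assuming a page is represented as a list of attribute dictionaries. The `find_element` helper and the "at least two matching attributes" rule are hypothetical and only illustrate the fallback principle; real tools use richer signals and their own APIs.

```python
from typing import Optional

Element = dict[str, str]


def find_element(page: list[Element], recorded: Element) -> Optional[Element]:
    """Look up an element by id; if the id changed, fall back to other attributes."""
    # 1. Primary strategy: exact id match, as a conventional test script does.
    target_id = recorded.get("id")
    if target_id is not None:
        for el in page:
            if el.get("id") == target_id:
                return el

    # 2. "Healing" fallback: score each element by how many of the other
    #    recorded attributes (tag, text, role, ...) still match.
    fallback_keys = [key for key in recorded if key != "id"]
    best, best_score = None, 0
    for el in page:
        score = sum(1 for key in fallback_keys if el.get(key) == recorded[key])
        if score > best_score:
            best, best_score = el, score

    # Require two matching attributes so the test does not latch onto
    # an unrelated element.
    return best if best_score >= 2 else None


# What the test recorded when it was written.
recorded = {"id": "checkout-btn", "tag": "button", "text": "Pay now", "role": "button"}

# The page after a developer renamed the button's id.
page_v2 = [
    {"id": "nav-home", "tag": "a", "text": "Home"},
    {"id": "btn-pay", "tag": "button", "text": "Pay now", "role": "button"},
]

match = find_element(page_v2, recorded)
print(match["id"])  # btn-pay
```

A conventional script would fail at step 1 and need a manual fix. The fallback lets the test keep running and flags the changed id for later review.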

Smarter prioritization. AI analyzes which parts of your codebase changed and which tests are most likely to catch problems, then runs those tests first. Instead of running every test every time, AI focuses testing effort where it matters most — giving you faster feedback on the changes that carry the highest risk.
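The ordering logic can be approximated with a simple heuristic. The sketch below assumes you already know which files each test exercises and how often each test has failed in the past; the file names, test names, and failure rates are made up for illustration, and production tools generally replace this hand-written score with a learned model.

```python
def prioritize_tests(
    changed_files: list[str],
    coverage: dict[str, set[str]],
    failure_rate: dict[str, float],
) -> list[str]:
    """Order tests so those touching changed files run first.

    Ties are broken by historical failure rate (higher first).
    """
    changed = set(changed_files)

    def score(test: str) -> tuple[int, float]:
        overlap = len(changed & coverage[test])
        return (overlap, failure_rate.get(test, 0.0))

    return sorted(coverage, key=score, reverse=True)


# Hypothetical mapping of tests to the source files they exercise.
coverage = {
    "test_login": {"auth.py"},
    "test_search": {"search.py", "cart.py"},
    "test_checkout": {"cart.py", "payment.py"},
}
# Hypothetical share of past runs in which each test failed.
failure_rate = {"test_login": 0.02, "test_search": 0.05, "test_checkout": 0.10}

order = prioritize_tests(["payment.py", "cart.py"], coverage, failure_rate)
print(order)  # ['test_checkout', 'test_search', 'test_login']
```

With a change to the payment and cart code, the checkout test runs first because it touches both changed files, so a failure there surfaces within the first moments of the run rather than at the end.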

What this means for your website

If you’re commissioning a web project, AI-powered testing translates to several concrete benefits:

Fewer post-launch issues. Higher test coverage means more bugs caught before they reach your customers. The combination of automated test generation and visual regression detection covers scenarios that manual testing routinely misses.

Faster development cycles. When testing is faster and more automated, development teams can iterate more quickly without sacrificing quality. As we discussed in our article about how AI speeds up development, the goal isn’t just speed — it’s maintaining quality at higher velocity.

Better cross-device consistency. AI testing tools systematically verify your website across dozens of browser and device combinations. Your customers get a consistent experience whether they’re on Chrome, Safari, Firefox, or a mobile browser — without your QA team manually checking each one.

Lower long-term testing costs. AI testing tools require an upfront investment, but organizations report 30 to 40 percent reductions in overall QA costs as automated testing takes over the repetitive work. We covered how to think about these investments in our AI development budgeting guide.

Confidence at launch. Perhaps most importantly, AI-powered testing gives you and your team greater confidence when pushing updates live. Instead of hoping nothing broke, you have systematic verification that your critical user paths still work. For e-commerce sites, lead generation forms, or any website where downtime costs real money, that confidence has measurable business value.

Where human testers still matter

AI testing excels at the repetitive, systematic work. But some aspects of quality assurance require human judgment that AI can’t replicate.

Usability testing needs real people. Does the checkout flow feel intuitive? Is the navigation confusing? Are error messages helpful? These questions require human empathy and understanding of user behavior.

Business logic validation is contextual. AI can verify that a form submits correctly, but it can’t judge whether the workflow makes sense for your specific business process. Human testers who understand your business catch issues that purely technical testing misses.

Accessibility requires human review. Automated tools catch many accessibility issues, such as missing alt text, insufficient color contrast, and keyboard navigation gaps. But automated scans are a starting point, not a finish line: a full accessibility audit needs human evaluators who use assistive technology and understand how real users with disabilities interact with your website.

The best QA teams combine AI tools with experienced human testers. As we explored in our article about why team experience matters, the technology amplifies human skill — it doesn’t replace it.

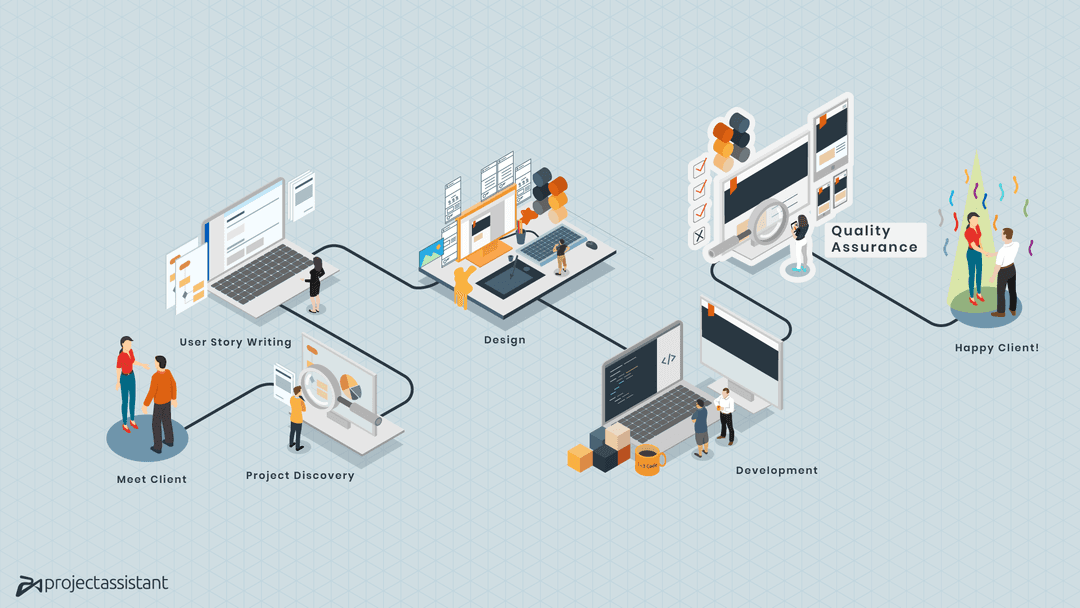

How to evaluate testing in your web project

When evaluating a development agency’s testing approach, look for these indicators:

Automated testing is part of the workflow, not an afterthought. Tests should run automatically as part of the development process — not manually triggered the week before launch.

Cross-browser and cross-device testing is systematic. Ask which browsers and devices are included in the testing matrix. A good agency tests against the browsers your customers actually use.

Regression testing is automated. Every code change should trigger automated tests that verify nothing broke. Manual-only regression testing is a red flag at modern development pace.

The team explains their QA process clearly. Agencies that invest in quality can describe their testing process in detail. If you need help framing these conversations, our guide to questions to ask your web agency covers what to look for.

Frequently asked questions

Do I need to budget separately for AI testing tools?

Usually, no. AI testing tools are typically part of the development agency’s infrastructure — similar to how they provide version control and deployment tools. The cost is factored into their development process, not billed separately. If you want to understand the full cost picture, our budgeting guide breaks it down.

Will AI testing make my project launch faster?

It can. Faster testing cycles mean developers get feedback sooner, which reduces the back-and-forth between development and QA. The biggest time savings come from automated regression testing — verifying that new changes don’t break existing functionality.

Can AI testing guarantee my website will be bug-free?

No testing approach — AI or human — can guarantee zero bugs. What AI testing does is dramatically increase the likelihood of catching issues before they reach your customers. It’s about reducing risk, not eliminating it entirely.

How does AI testing relate to the AI coding tools discussed earlier in this series?

They’re complementary. AI coding assistants help developers write code faster, while AI testing tools help verify that code works correctly. As we discussed in our article about how we use AI in practice, faster code production requires proportionally stronger quality processes.

The bottom line: quality scales with the right tools

AI-powered testing isn’t about replacing your QA team. It’s about giving that team superpowers — the ability to test more thoroughly, catch issues faster, and maintain quality even as development velocity increases.

For business owners, the takeaway is simple: ask your development partner about their testing process. The agencies delivering the most reliable websites in 2024 are the ones that have invested in smarter quality assurance alongside smarter development tools.

Get in touch with our quality assurance team to learn how we build testing into every stage of your web project.